Research

In a bustling restaurant, even as faces vanish behind waiters and words are lost in the clatter of dishes, our brain seamlessly fills in the gaps, letting us follow the conversation and perceive the scene as if nothing were missing. How does the brain produce stable perception from evidence that is partial, shifting, or conflicting?

I study how the brain recruits memory to understand the world when sensory input is uncertain due to environmental noise or to sensory decline.

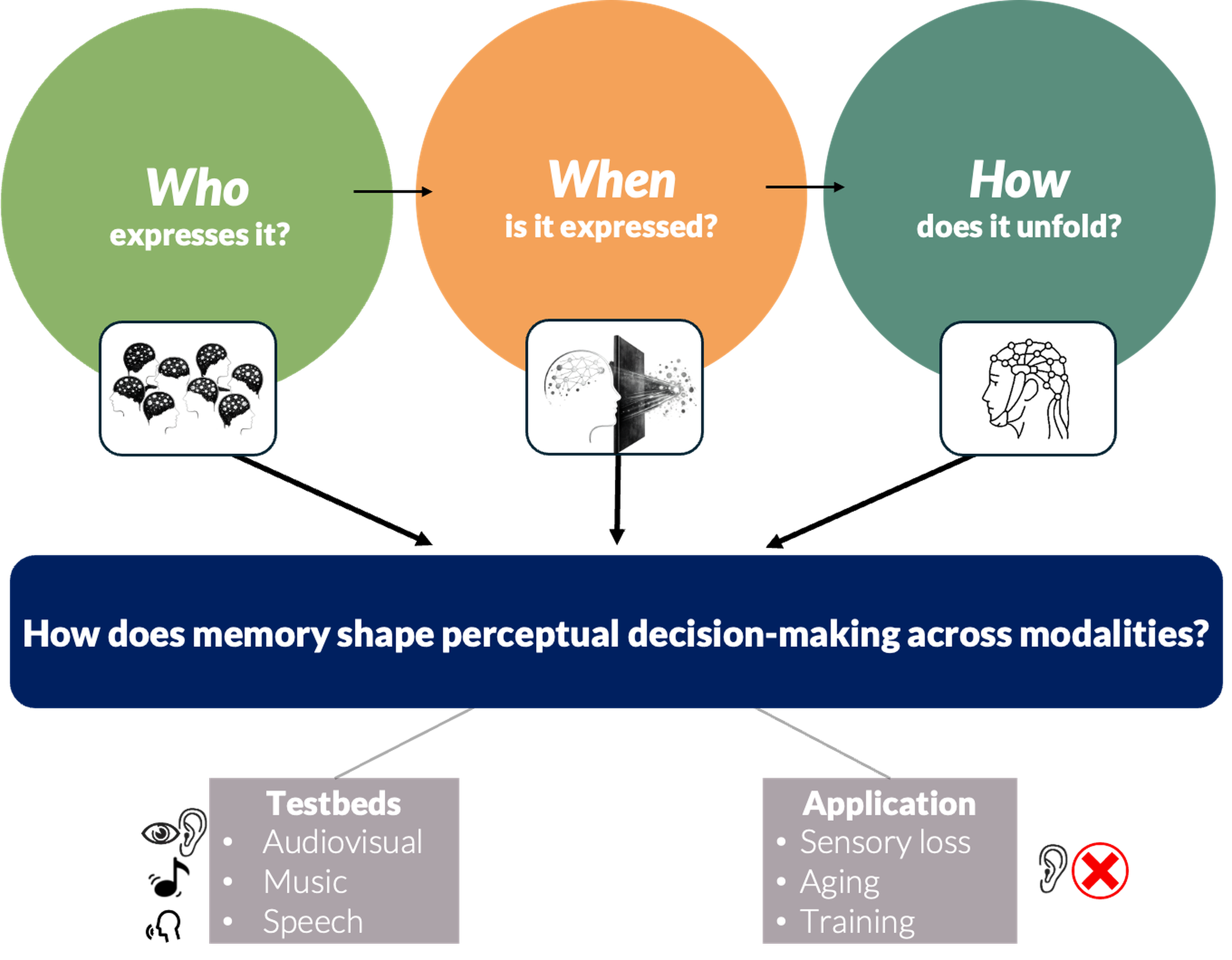

To address this question, I combine behavioural manipulations, electroencephalography (EEG), statistical modeling, and analyses of individual differences. Together, these methods determine WHO benefits most from memory-guided inference, WHEN memory reshapes perceptual processing (that is, the stages at which it becomes behaviourally consequential), and HOW this influence unfolds through coordinated neural dynamics.

I use a diverse set of carefully curated testbeds—speech, music, and audiovisual tasks—to determine whether the brain uses unified, memory-guided predictive strategies to stabilize perception across modalities and levels of complexity.

The long-term goal of my research program is 1) to develop an amodal account of how memory shapes perceptual decision-making and 2) to leverage this framework to support perceptual inference in the face of age-related sensory decline.

1. Determining When Learning Shapes Perception

When does acquired knowledge guide real-time inference and when does it remain silent?

Learning does not guarantee its expression. I show that experience can leave robust neural traces that remain behaviourally silent, separating learning from its deployment in perception.

This dissociation raises two key questions. Is the transition from learning to expression gradual or thresholded? What mechanisms allow silent traces to be reawakened?

To answer them, my research combines controlled behavioural paradigms with high-temporal-resolution EEG to track memory formation and its influence on perception. By manipulating attention, goals, awareness, and context, I identify when prior experience shapes perception and how silent representations are reactivated.

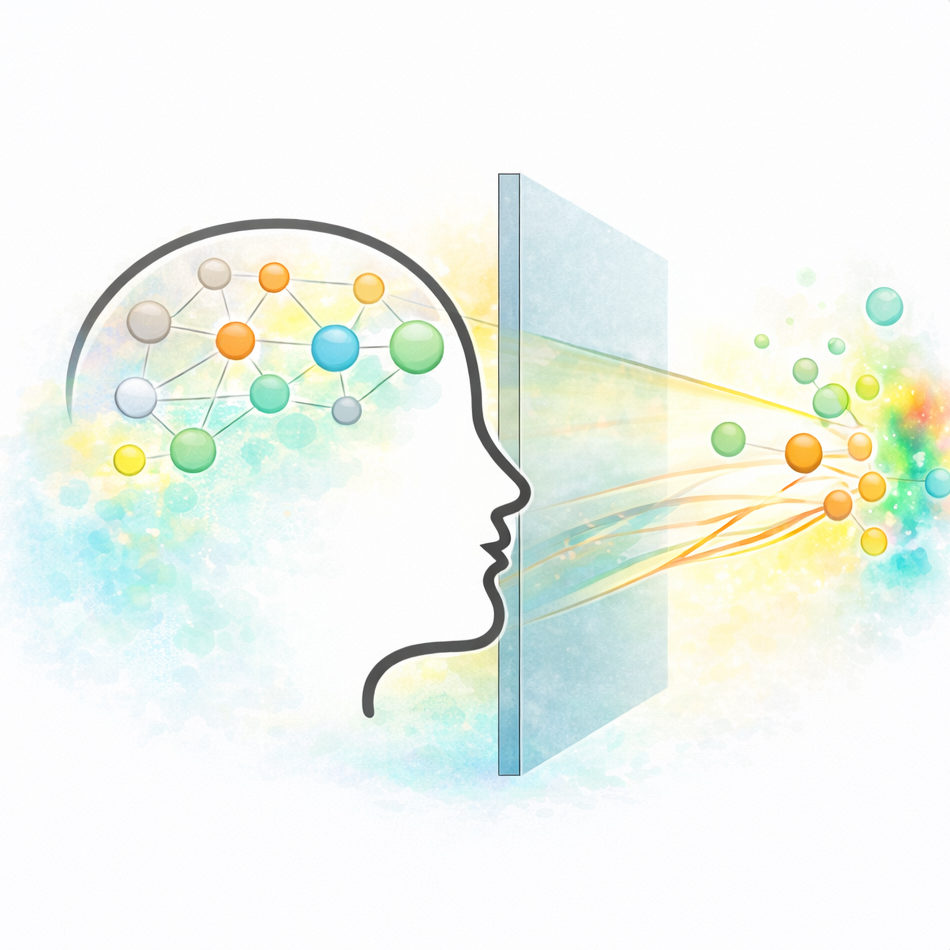

2. Dissociating Prediction from Postdiction in Perceptual Inference

Do predictive and postdictive influences reflect the same process, or fundamentally different forms of inference?

Context shapes perception both before input arrives and after additional information becomes available. In my work, I test whether predictive and postdictive influences reflect shared or distinct mechanisms, and demonstrate that they diverge in their temporal dynamics and behavioural consequences.

A genuine dissociation implies that anticipation and revision operate under different informational constraints and are differentially sensitive to timing and uncertainty. I therefore manipulate sensory reliability and temporal structure across audiovisual contexts to determine how contextual information is reweighted as evidence unfolds.

Clarifying these stage-specific constraints is central to building a unified account of how perceptual inference unfolds as evidence becomes available.

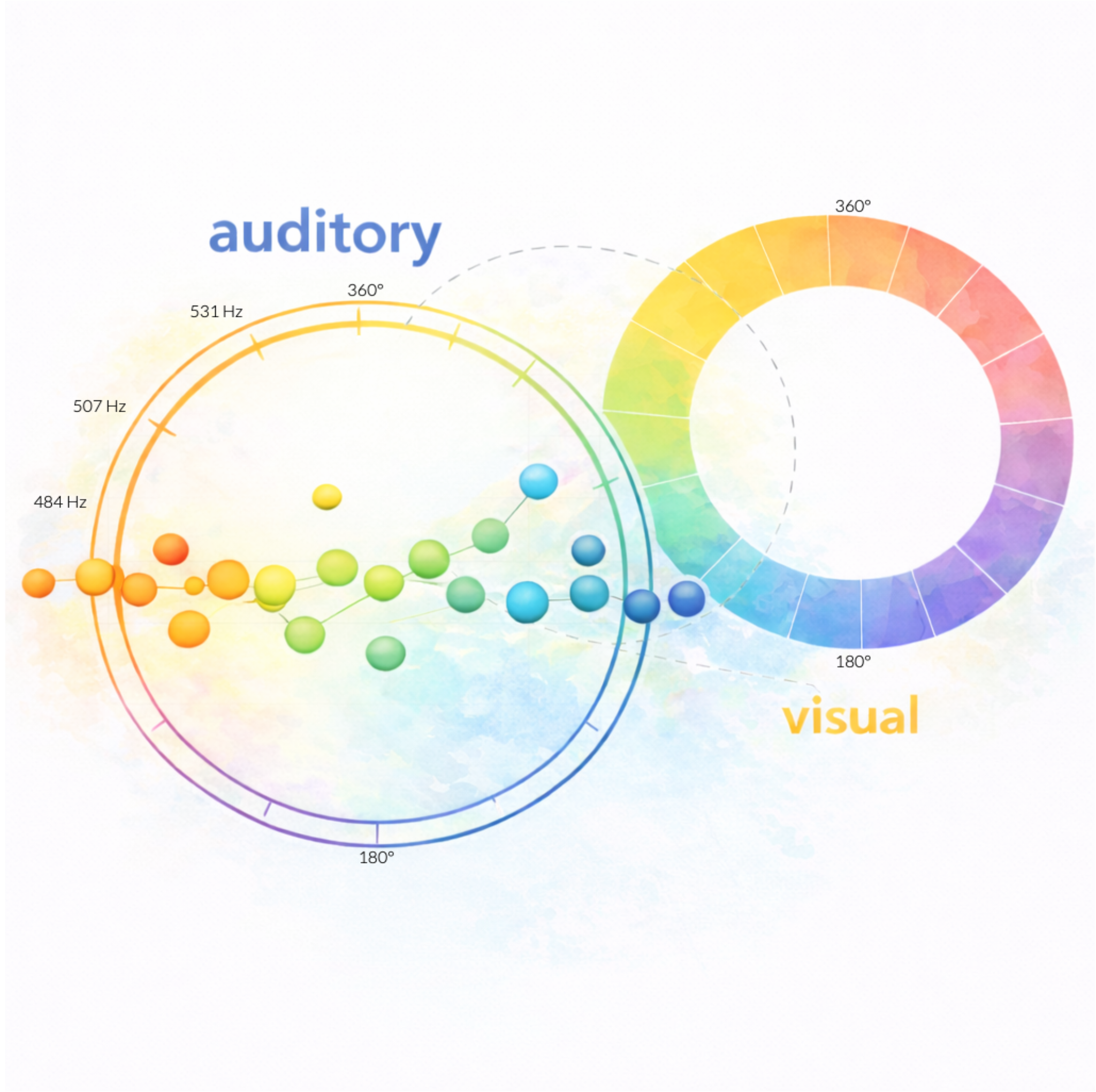

3. Aligning Feature Structure Across Modalities

Can a common feature space be established to test whether perceptual inference principles generalize across senses?

A principled comparison of perceptual inference across modalities rests on expressing sensory information within a shared feature space. In my work, I extend circular feature-space methods to align auditory and visual dimensions within a common continuous format, enabling direct comparison of precision and bias across senses.

This alignment strengthens cross-modal tests of perceptual inference by ensuring that observed differences reflect genuine modality-specific processes rather than mismatches in how the stimuli are parameterized. Establishing a common feature space provides a principled foundation for building a unified account of perceptual inference across sensory domains.
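As a rough illustration of what comparing precision and bias in a circular format can involve, the sketch below computes two standard circular statistics from response errors: the circular mean of the errors (bias) and the mean resultant length (a precision index between 0 and 1). It is a generic textbook calculation, not the analysis pipeline used in this work. The function names and the assumption that both auditory and visual features have already been mapped onto angles in radians are hypothetical.

```python
import math

def circular_errors(responses, targets):
    """Signed response-minus-target errors, wrapped to [-pi, pi].

    Assumes (hypothetically) that each feature value is already an angle
    in radians on a shared circular space.
    """
    return [math.atan2(math.sin(r - t), math.cos(r - t))
            for r, t in zip(responses, targets)]

def bias_and_precision(errors):
    """Return (bias, precision) for a list of circular errors.

    bias: circular mean of the errors, in radians.
    precision: mean resultant length, from 0 (uniform) to 1 (identical errors).
    """
    n = len(errors)
    mean_cos = sum(math.cos(e) for e in errors) / n
    mean_sin = sum(math.sin(e) for e in errors) / n
    bias = math.atan2(mean_sin, mean_cos)
    precision = math.hypot(mean_cos, mean_sin)
    return bias, precision

# Illustrative values only: the first pair straddles the 0 / 2*pi boundary.
errors = circular_errors([0.05, 3.0], [2 * math.pi - 0.05, 2.9])
bias, precision = bias_and_precision(errors)
```

Because errors are wrapped before averaging, a response just past the wrap-around point counts as a small error rather than a near-full-circle one, which is what makes the same two numbers comparable across modalities.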

4. Using Music to Test Principles of Perceptual Inference

How do acoustic cues and musical structure jointly constrain perceptual inference?

Music provides a powerful testbed for probing how sensory features and learned structure jointly shape perception. I dissociate bottom-up acoustic cues from experience-driven expectations and show that perceptual inference in complex auditory scenes reflects systematic cue weighting rather than dominance by either source alone. By examining how these influences interact, I test whether core principles of cue integration extend to richly organized auditory input.

5. Adapting and Strengthening Perceptual Inference Under Sensory Degradation

How can perception be supported when sensory input becomes unreliable?

When sensory input becomes unreliable, perception must rely more heavily on internally stored knowledge to resolve ambiguity. In my work, I manipulate stimulus reliability to examine how the influence of prior knowledge changes as the signal degrades.
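One common way to formalize this idea is a Gaussian ideal observer, in which the prior and the sensory observation are averaged in proportion to their reliabilities (inverse variances). The sketch below is that textbook model under stated assumptions, not a model taken from this work. The function name, parameter names, and numeric values are hypothetical.

```python
def combine_prior_and_evidence(observation, sensory_sd, prior_mean, prior_sd):
    """Reliability-weighted estimate for a Gaussian prior and Gaussian noise.

    Returns (estimate, prior_weight), where prior_weight is the share of the
    estimate contributed by the prior (between 0 and 1).
    """
    sensory_precision = 1.0 / sensory_sd ** 2
    prior_precision = 1.0 / prior_sd ** 2
    prior_weight = prior_precision / (prior_precision + sensory_precision)
    estimate = prior_weight * prior_mean + (1.0 - prior_weight) * observation
    return estimate, prior_weight

# Illustrative values only: the same observation under clear and degraded input.
clear = combine_prior_and_evidence(10.0, sensory_sd=1.0, prior_mean=0.0, prior_sd=1.0)
degraded = combine_prior_and_evidence(10.0, sensory_sd=3.0, prior_mean=0.0, prior_sd=1.0)
```

In this model, increasing the sensory noise raises the prior's weight, so the estimate is drawn further toward stored knowledge as the signal degrades.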

In parallel, I investigate voice familiarity as a form of stored knowledge that can be leveraged to improve speech intelligibility. I show that familiarity benefits depend on attention and cognitive resources, vary across individuals, and can be strengthened through targeted exposure.

By clarifying how prior knowledge supports perception under degraded conditions, this work provides a foundation for strengthening communication in aging and hearing-related decline.